Your campaign is underperforming. You can see it in the numbers. But can you see your path to in-flight campaign optimization?

For most brands, the answer is no, and that gap is costing them. Most measurement tools are built to tell you what happened, not what to do about it while there’s still time to do something. By the time a post-campaign report lands, the budget is spent and the window to act has closed.

This is the core problem with how the industry treats measurement: as a report card, not an intelligence system. You get a grade. You don’t get a diagnosis.

But a grade isn’t enough when your campaign is still live. Knowing that performance is below target doesn’t tell you whether the problem is your creative rotation, your media placement, or both. And without that distinction, any optimization you make is essentially an expensive guess.

The brands that pull ahead aren’t just measuring more; they’re measuring differently. They’re getting specific, actionable signals while campaigns are still in flight: signals that tell them not just that something needs to change, but exactly what to change and where to start.

This is the insight gap MarketCast Total Effect closes.

Why Post-Campaign Measurement Arrives Too Late

The measurement tools most brands rely on were designed to explain the past. They deliver findings after the fact, summarizing what worked long after the campaign has wrapped and the budget has been spent.

And those findings arrive too late to change anything. You can note the results, adjust your thinking for next time, and move on. What you can’t do is act on them now, because now has already passed.

This is the gap that quietly erodes campaign performance at scale. Brands know something is off. The signals are there. But without a way to diagnose exactly where the problem lives — creative, media placement, or both — they’re left making optimization decisions based on instinct rather than evidence.

The result? Budgets get shifted in the wrong direction. Creative is swapped when media was the issue, or media is overhauled when the creative was the culprit all along. Good intentions, wrong lever.

The brands that close this gap are working with better information, delivered while they can still do something about it.

Creative vs. Media: Why Siloed Measurement Leads to the Wrong Fix

Creative, media, and outcomes are typically measured in isolation, with separate reports, teams, and timelines. Creative teams assess what the ad did. Media teams assess where it ran. And somewhere downstream, an insights team tries to stitch it all together into a finding that’s supposed to mean something to someone.

By the time those threads connect, the campaign is over.

This siloed approach makes it nearly impossible to answer the question that actually matters during a live campaign: “Is the problem the creative, the placement, or both?” Without that answer, optimization becomes expensive guesswork.

Today, brands stitch together disconnected data from multiple sources, gathering ever more of it and trying to build a coherent picture fast enough for it to still matter. But what’s needed isn’t more data. It’s connected data across creative performance, media placement, and campaign outcomes, in real time, with a clear line to what must change and why.

This is the diagnostic gap Total Effect closes.

In-Flight Optimization: Two Retailers, Two Root Causes, Two Different Strategies

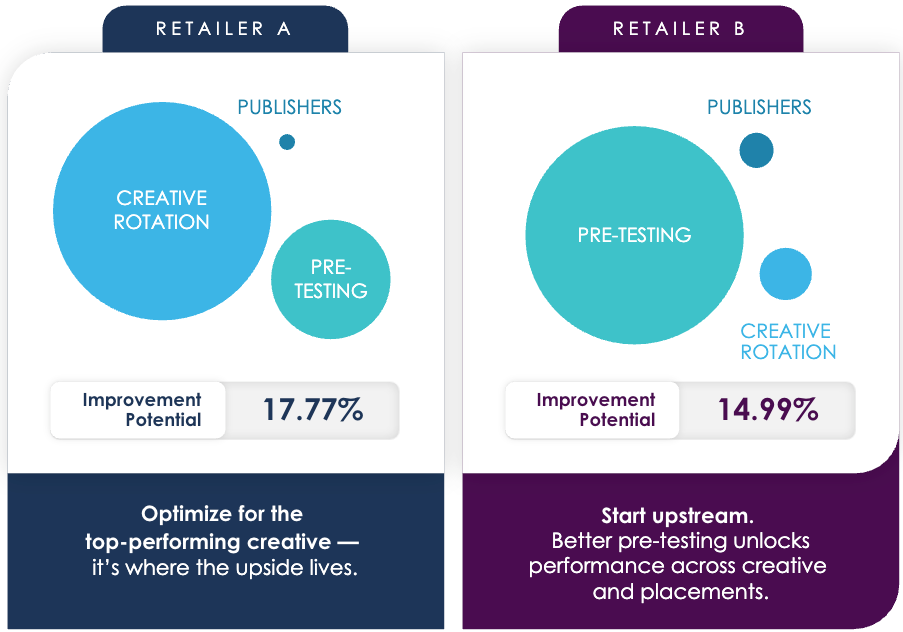

Consider two retailers running campaigns at the same time, measured through the same lens. Both have meaningful room to improve. Retailer A is sitting on 17.77% improvement potential. Retailer B is at 14.99%. Similar scale, similar stakes.

But that’s where the similarity ends.

For Retailer A, Total Effect identified creative rotation as the primary lever. Underperforming creative was in active rotation, spending budget, serving impressions, and dragging down overall campaign effectiveness. The fix was clear: pull what isn’t working, weight toward what is. No media overhaul required. No upstream rethink. Just smarter deployment of creative that was already doing the work.

Knowing which lever to pull matters just as much as knowing you need to optimize.

Retailer B’s picture looked different. Their biggest opportunity wasn’t in rotation — it was upstream. The data pointed to pre-testing as the primary path to improvement, suggesting that stronger creative validation before campaigns go live would improve performance across both creative and placements.

Both retailers had significant room for improvement, but their diagnoses were completely different, and so were the action plans that followed.

This is the insight that changes how brands operate. Without diagnostic precision, both might have chased the same generic fix — shuffling media spend, swapping out creative at random — and left the real opportunity untouched. With it, each team knows exactly where to focus and why.

Same Improvement Potential, Different Levers — Why the Numbers Don’t Tell the Whole Story

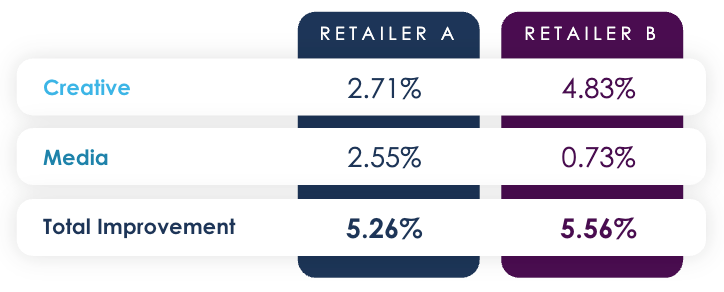

Now consider a second pair of retailers. This time, the improvement potential is nearly identical — 5.26% for Retailer C, 5.56% for Retailer D. On the surface, these two brands look like they’re in the same position, facing the same challenge, needing the same fix.

They’re not.

For Retailer C, Total Effect showed the improvement opportunity was almost evenly split, with 2.71% attributable to creative and 2.55% to media placement, leading to a more balanced optimization strategy.

A tale of two brands: when in-flight optimization choices diverge.

Total Effect reveals two different paths to get to the same destination.

Retailer D tells a different story. Despite a nearly identical total opportunity, 4.83% of their 5.56% improvement potential sits in creative rotation alone. Media placement accounts for just 0.73%. For this brand, a media overhaul would be wasted and possibly even detrimental. Their opportunity lives almost entirely in getting the right creative in front of the right audiences.

These are the insights that generic measurement simply cannot surface. Without the ability to disaggregate improvement potential by lever, in real time, while the campaign is still live, both brands would be guessing. And a guess that moves the wrong lever doesn’t just fail to improve performance. It consumes budget, time, and organizational credibility in the process.
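To make the arithmetic behind the Retailer C and Retailer D comparison concrete, here is a minimal Python sketch that splits each retailer's improvement potential by lever and names the dominant one. It uses only the percentages quoted above. The `LeverBreakdown` class, the `primary_lever` helper, and the 65% dominance threshold are hypothetical choices made for illustration; they are not how Total Effect computes or labels its results.

```python
from dataclasses import dataclass


@dataclass
class LeverBreakdown:
    """Improvement potential split by lever, in percentage points.

    Hypothetical structure for illustration only.
    """
    creative: float
    media: float

    @property
    def total(self) -> float:
        # Total improvement potential is the sum of the two levers.
        return round(self.creative + self.media, 2)

    def share(self, lever: str) -> float:
        """Fraction of the total potential attributable to one lever."""
        return getattr(self, lever) / (self.creative + self.media)

    def primary_lever(self, dominance: float = 0.65) -> str:
        """Name the dominant lever, or 'balanced' if neither reaches the
        threshold. The 0.65 default is an assumed cutoff, not a published one.
        """
        for lever in ("creative", "media"):
            if self.share(lever) >= dominance:
                return lever
        return "balanced"


# Figures quoted in the article for the second pair of retailers.
retailers = {
    "Retailer C": LeverBreakdown(creative=2.71, media=2.55),
    "Retailer D": LeverBreakdown(creative=4.83, media=0.73),
}

for name, breakdown in retailers.items():
    print(
        f"{name}: total {breakdown.total:.2f}%, "
        f"creative share {breakdown.share('creative'):.0%}, "
        f"primary lever: {breakdown.primary_lever()}"
    )
```

Under these assumptions, Retailer C comes out as balanced (creative is roughly 52% of a 5.26% total), while Retailer D comes out as creative-led (roughly 87% of a 5.56% total). The totals are nearly the same, but the split points to different priorities.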

What This Means for Brands

The insight buried in these four examples is about the cost of not knowing which lever to pull. Two brands can have nearly identical improvement potential and need completely different strategies to capture it. Two other brands can face the same root cause, underperforming creative, and still require different fixes.

This is what generic measurement misses. When creative, media, and outcomes are tracked in isolation, the best you can do is identify that something is wrong. You can’t isolate where. You can’t prioritize what to fix first. And you certainly can’t do any of it fast enough to matter while the campaign is still live.

This is the distinction that changes the value insights bring to brands. Showing a finding to a media or brand team is one thing. Showing that finding along with exactly what to do about it is something else entirely.

MarketCast Total Effect connects creative performance, media placement, and campaign outcomes into a single diagnostic view, surfacing not just where improvement potential exists, but which lever unlocks it, and how much.

Case Study: See how this big-box retailer could have used predictive intelligence to optimize campaigns in-flight.

Ready to optimize the right thing when it still matters?

Request your personalized demo.